Subwoofer Specs Face Scrutiny After 40-Driver Klippel Test Project

A major car audio testing project has put the subwoofer market under pressure by showing how far some published specifications can drift from lab-measured behavior. The project, funded by ResoNix Sound Solutions founder Nick Apicella, involved about $23,000 in third-party Klippel testing across roughly 40 subwoofers. Public results available so far cover 21 drivers, all measured under the same standard.

Practical verdict: the data is useful for anyone buying a subwoofer, but it should not be read as a simple winner list. It exposes real weaknesses in published Xmax and power claims, yet the company publishing the leaderboard is also selling prototype subwoofers that rank at the top. Buyers should treat the measurements as valuable technical evidence and the commercial context as part of the story.

Why Subwoofer Specs Became So Easy to Misread

The controversy starts with one of the most quoted numbers in car audio: Xmax. In simple terms, Xmax is supposed to describe how far a subwoofer cone can move while still producing usable output. Buyers often use it as a shortcut for performance. More Xmax looks like deeper bass, louder output and better value.

The problem is that Xmax has not always been measured or published under one universal standard. A brand can present a number based on one assumption, while another brand uses a different method. Both may appear defensible on paper, but the buyer ends up comparing figures that do not mean the same thing.

The source example from Adire Audio makes the issue clear. The same Shiva driver produced very different Xmax numbers depending on the method used: 3.7mm under IEC 62458, 14.7mm under a 10% THD method, and more than 16mm under a 50% BL/compliance threshold. The largest figure is more than four times the smallest. It is the difference between a modest driver and a driver that appears far more capable on a spec sheet.
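To put the spread in proportion, the short Python sketch below divides each quoted Shiva figure by the IEC figure. The three values come from the paragraph above. The variable names are illustrative, and "more than 16mm" is represented by its lower bound of 16.0, so the last ratio is a minimum.

```python
# Xmax figures quoted for the same Adire Audio Shiva driver, in millimetres.
# Assumption: "more than 16mm" is entered as its lower bound, 16.0.
xmax_by_method_mm = {
    "IEC 62458": 3.7,
    "10% THD": 14.7,
    "50% BL/compliance": 16.0,
}

# Express every figure as a multiple of the most conservative (IEC) number.
baseline_mm = xmax_by_method_mm["IEC 62458"]
ratios = {
    method: value / baseline_mm
    for method, value in xmax_by_method_mm.items()
}

for method, ratio in ratios.items():
    value = xmax_by_method_mm[method]
    print(f"{method}: {value:.1f} mm ({ratio:.2f}x the IEC figure)")
```

On these numbers, the 10% THD figure is roughly four times the IEC figure, and the 50% threshold figure is larger still.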

For a customer, this creates a trap. The number looks objective, but the method behind it may not be visible. A subwoofer can advertise impressive excursion and still lose clean control before it reaches that point.

What Klippel Testing Changes

Klippel testing matters because it looks beyond simple small-signal specifications. Traditional Thiele-Small parameters describe a driver near rest, where behavior is mostly linear. That is useful, but a subwoofer does not live near rest when it is reproducing hard bass in a real car system. It moves, heats, compresses, distorts and reaches mechanical or electrical limits.

The Klippel Large Signal Identification module measures what happens as the driver moves through real operating stroke. Instead of asking only how far the cone can move, it asks how far the cone can move before the driver stops behaving cleanly.

Three curves become especially important:

- BL(x) shows how motor force changes as the voice coil moves through the magnetic gap.

- Kms(x) shows how the suspension stiffens or relaxes across travel.

- Le(x) shows how voice coil inductance changes with cone position.

Those curves reveal limits that a normal spec sheet can hide. A driver may physically reach an advertised excursion number but become too nonlinear to produce clean bass there. That distinction is central to the ResoNix dataset.

The project uses the industry-accepted 70% BL reference for Xmax, meaning the excursion at which motor force has fallen to 70% of its rest value, and a 17% Le variance threshold for practical clean output. In plain language, one number tracks motor-force loss, while the other catches inductance-related distortion that may appear before the motor limit is reached.
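The two thresholds can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the curves are synthetic, only one direction of travel is modelled, and Le variance is treated as the relative change in inductance from its rest value. The source does not describe how the lab or the Klippel software actually computes either limit, and `excursion_at_threshold` is a hypothetical helper, not part of any Klippel tool.

```python
def excursion_at_threshold(xs, values, threshold):
    """Return the first x where `values` crosses `threshold`.

    Uses linear interpolation between neighbouring samples and returns
    None if the threshold is never crossed.
    """
    points = list(zip(xs, values))
    for (x0, v0), (x1, v1) in zip(points, points[1:]):
        crosses = (v0 - threshold) * (v1 - threshold) <= 0
        if crosses and v0 != v1:
            return x0 + (threshold - v0) * (x1 - x0) / (v1 - v0)
    return None


# Synthetic, made-up curves for one direction of travel (0 to 20 mm).
xs_mm = [i * 0.5 for i in range(41)]
bl_ratio = [1 - (x / 20) ** 2 for x in xs_mm]  # BL(x) / BL(0)
le_deviation = [0.02 * x for x in xs_mm]       # |Le(x) - Le(0)| / Le(0)

# Excursion where BL has fallen to 70% of its rest value.
bl_limit_mm = excursion_at_threshold(xs_mm, bl_ratio, 0.70)
# Excursion where Le has moved 17% away from its rest value.
le_limit_mm = excursion_at_threshold(xs_mm, le_deviation, 0.17)
# The practical clean stroke is set by whichever limit comes first.
clean_limit_mm = min(bl_limit_mm, le_limit_mm)

print(f"BL-limited excursion: {bl_limit_mm:.2f} mm")
print(f"Le-limited excursion: {le_limit_mm:.2f} mm")
print(f"Practical clean stroke: {clean_limit_mm:.2f} mm")
```

With these invented curves the inductance limit arrives at about 8.5 mm, before the motor limit near 11 mm. A driver like that could honestly publish the larger BL-based number while its clean output ends at the smaller one.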

What the Public Leaderboard Shows

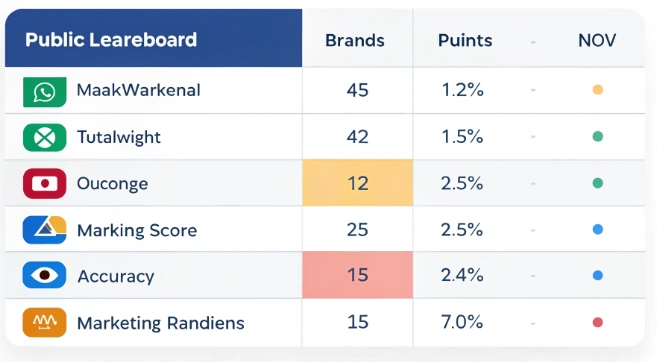

The larger project reportedly covers about 40 subwoofers, while 21 driver results were public at the time of the source material. Those published results were graded across 14 weighted categories totaling 1,250 points. A separate 0-to-100 marketing accuracy score compared each brand’s published claims against the Klippel measurements.

The spread was wide. Some drivers scored above 900 points, while others landed barely above 200. Nine of the 21 public drivers received marketing accuracy scores below 50, which suggests that the gap between published claims and measured behavior was not rare.

The most damaging cases were not minor disagreements over definitions. Some results suggested that the driver design did not support the advertised number cleanly.

Wavtech’s thinPRO12 was one of the clearest examples. It claimed 20mm of Xmax but measured 8.85mm, giving it the largest raw excursion shortfall in the dataset and a marketing accuracy score of 15 out of 100. The issue appeared to involve a mismatch between the coil height and the driver’s split-gap geometry, not merely a wording dispute.

Stereo Integrity’s BM-11 performed worse in the composite ranking. It finished last at 225 out of 1,250 and scored 5 out of 100 for marketing accuracy. The driver claimed 14mm of Xmax and measured 9.31mm. The test also found that the suspension was biased outward by about 6mm, contributing to roughly 45% total harmonic distortion at 25Hz when tested at 17 volts.

Stereo Integrity’s SQL 10 added another problem: thermal behavior. Its voice coil triggered Klippel thermal protection after five minutes at roughly 480 watts, about half of its 1,000-watt rating. That matters because power claims influence amplifier matching, system design and buyer expectations.
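The gaps in these three cases can be checked with simple arithmetic. The Python sketch below uses only the claimed and measured figures quoted above. It does not reproduce the project's marketing accuracy score, whose formula is not given in the source, and the variable names are illustrative.

```python
# (driver, claimed Xmax in mm, measured Xmax in mm), as quoted in the article.
xmax_claims = [
    ("Wavtech thinPRO12", 20.0, 8.85),
    ("Stereo Integrity BM-11", 14.0, 9.31),
]

shortfalls_mm = {}
measured_share = {}
for name, claimed_mm, measured_mm in xmax_claims:
    # Raw gap between the spec sheet and the lab result.
    shortfalls_mm[name] = claimed_mm - measured_mm
    # Fraction of the published figure that the lab confirmed.
    measured_share[name] = measured_mm / claimed_mm
    print(
        f"{name}: {shortfalls_mm[name]:.2f} mm short, "
        f"{measured_share[name]:.0%} of claim"
    )

# Stereo Integrity SQL 10: power at thermal protection versus rated power.
sql10_rated_w = 1000
sql10_protect_w = 480  # "roughly 480 watts" in the article
sql10_power_share = sql10_protect_w / sql10_rated_w
print(f"SQL 10 reached thermal protection at {sql10_power_share:.0%} of rating")
```

The thinPRO12 has the larger raw shortfall, at just over 11 mm, even though the BM-11 ranked lower overall. That shows how a composite score and a single-spec gap can point in different directions.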

The Difference Between Advertised Xmax and Clean Output

One of the most useful parts of the project is that it separates two ideas buyers often merge into one: measured excursion and practical clean stroke. A subwoofer can meet a BL-based Xmax claim and still become limited by inductance before that point.

This is where Le matters. If voice coil inductance changes too much with cone position, distortion can rise even when the motor-force number looks acceptable. The cone may still move. The bass may still be loud. But clean output is already compromised.

Several drivers showed this split clearly. Audiofrog’s GB12D4 reached 87% of its 19mm BL claim, but its practical clean stroke measured only 5.68mm, about 30% of the published figure. Focal’s SUB 10WM Utopia M exceeded its BL claim, measuring 20.50mm against a 17mm spec, yet its clean-output ceiling was 5.64mm, roughly 33% of the advertised number. JL Audio’s 12W3v3-4 met its 13mm BL claim at 13.81mm, but clean stroke topped out at 5.38mm, about 41% of the published figure.

JL Audio’s 12W7AE-3 showed the same pattern at a higher performance tier. It exceeded its 29mm BL claim by reaching 34.1mm, but practical output was inductance-limited to 18.77mm, about 65% of the published spec.
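The percentages in this section follow directly from dividing each clean-stroke figure by the published Xmax. A minimal Python sketch using only the numbers quoted above (the dictionary layout and names are illustrative):

```python
# driver: (published Xmax in mm, practical clean stroke in mm)
clean_stroke_data = {
    "Audiofrog GB12D4": (19.0, 5.68),
    "Focal SUB 10WM Utopia M": (17.0, 5.64),
    "JL Audio 12W3v3-4": (13.0, 5.38),
    "JL Audio 12W7AE-3": (29.0, 18.77),
}

# Share of the published figure that remains usable as clean stroke.
clean_share = {
    name: clean_mm / published_mm
    for name, (published_mm, clean_mm) in clean_stroke_data.items()
}

# List the drivers from the smallest usable share to the largest.
for name, share in sorted(clean_share.items(), key=lambda item: item[1]):
    published_mm, clean_mm = clean_stroke_data[name]
    print(f"{name}: {clean_mm:.2f} mm clean of {published_mm:.0f} mm ({share:.0%})")
```

Sorting by the ratio rather than by absolute excursion makes the pattern easier to see: three of the four drivers keep less than half of their published figure as clean stroke.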

For buyers, this is the key lesson. A subwoofer spec can be technically true under one measurement method and still fail to describe clean usable behavior in a system. Clean bass is not just movement. It is controlled movement.

Which Brands Looked Better in the Data

The dataset did not only expose weak claims. It also showed that some manufacturers published conservative or unusually accurate numbers.

Dynaudio’s Esotar2 1200 stood out because its published figure was conservative. The company claimed 10.25mm, while the lab measured 14.37mm. That means the driver exceeded its published claim by about 40%. Its distortion also stayed below 5% from 20Hz to 120Hz, helping it reach 904 out of 1,250.

Acoustic Elegance’s SBP 15, tested with the Apollo motor upgrade, performed even better in the non-ResoNix group. It measured 118% of its published Xmax claim and showed near-perfect BL symmetry. Its 983 out of 1,250 score made it the highest-ranked independent driver in the available database.

Audiomobile’s Encore 4412 was notable for precision. It measured 19.11mm against a 19.1mm claim, leaving almost no gap between marketing and lab result. It also earned a perfect 100 marketing accuracy score, one of only two non-ResoNix drivers to do so.

These results are important because they show the test was not simply a takedown of the category. Some brands came out looking careful, conservative or technically honest. The problem is not that all subwoofer specs are useless. The problem is that without a common measurement method, buyers cannot easily tell which specs are meaningful.

The Conflict Behind the Leaderboard

The strongest criticism of the ResoNix project is not the existence of the data. It is the structure around the data.

ResoNix’s own prototype subwoofers ranked in the top three spots. The GUS-15 prototype scored 1,077 out of 1,250, the GUS-12 scored 1,068, and the GUS-10 scored 996. The best non-ResoNix driver, Acoustic Elegance’s SBP 15, scored 983.
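To see how close the top of the table is, the composite scores quoted in this article can be expressed as a share of the 1,250-point maximum. The Python sketch below covers only the six scores the article mentions, not the full 21-driver leaderboard, and the labels are illustrative.

```python
MAX_POINTS = 1250

# Composite scores quoted in the article (a subset of the public leaderboard).
scores = {
    "ResoNix GUS-15 (prototype)": 1077,
    "ResoNix GUS-12 (prototype)": 1068,
    "ResoNix GUS-10 (prototype)": 996,
    "Acoustic Elegance SBP 15": 983,
    "Dynaudio Esotar2 1200": 904,
    "Stereo Integrity BM-11": 225,
}

# Rank from highest to lowest score.
ranking = sorted(scores.items(), key=lambda item: item[1], reverse=True)
for name, points in ranking:
    print(f"{name}: {points} / {MAX_POINTS} ({points / MAX_POINTS:.1%})")

# Margin between the lowest-ranked prototype and the best independent driver.
margin = scores["ResoNix GUS-10 (prototype)"] - scores["Acoustic Elegance SBP 15"]
print(f"GUS-10 leads the SBP 15 by {margin} points")
```

The 13-point margin between the GUS-10 and the SBP 15 is about 1% of the scale, so small choices in how the 14 categories are weighted matter a good deal for the order at the top.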

That creates an unavoidable conflict. The company funding and publishing the leaderboard is also selling the products that occupy the top positions. Those GUS models were listed for pre-order at $725 to $1,150, with delivery planned for early July 2026.

The presentation style adds to the tension. Competitor pages include detailed editorial breakdowns, marketing accuracy scores and sometimes blunt recommendations against purchase. ResoNix’s own prototype pages carry shorter disclaimers rather than the same full teardown format.

Measurement was reportedly performed by an unnamed third-party Klippel-trained engineering lab. ResoNix says the lab was kept anonymous to avoid industry drama. That explanation may be understandable, but it limits outside verification. Readers cannot directly check the lab arrangement beyond ResoNix’s own description.

Apicella’s Defense and the Industry’s Silence

Apicella’s defense rests on method. He says the same third-party lab measured all drivers, the scoring used formulas, visual layer-stacking reduced subjective judgment, and the same standard applied to both competitors and GUS prototypes. ResoNix has also said it will publish full Klippel data for GUS production batches and allow manufacturers to request retesting.

That is a stronger defense than simply asking people to trust the brand. Still, it does not remove the central issue: the leaderboard is controlled by a company with products in the race.

The industry response has been limited. According to the source, public searches for responses from brands including JL Audio, Sundown Audio, Audiofrog, Stereo Integrity, Adire Audio, Focal and Wavtech returned no on-record rebuttals as of May 2026.

The most meaningful public criticism came from RAWCAtOr, a YouTube reviewer who owns the worst-scoring Stereo Integrity BM-11. He accepted the measurements but challenged the interpretation, arguing that the scoring grades drivers against an idealized subwoofer rather than fully accounting for real-world use cases. His point is fair: a driver can perform poorly in one scoring system and still serve a narrow installation goal.

That does not erase the value of the data. It means the data should be read as engineering evidence, not as a universal buying command.

What Subwoofer Buyers Should Take From This

The practical lesson is not to stop trusting every brand. It is to stop reading one specification as the full truth. Xmax, power handling, sensitivity and frequency response all need context. A large excursion number is only useful if the driver can stay controlled, manage heat and avoid severe distortion at that operating range.

A buyer comparing subwoofers should look for:

- the measurement method behind Xmax;

- whether clean stroke is limited by BL, suspension or inductance;

- independent distortion data at low frequencies;

- realistic power testing, not only peak claims;

- enclosure requirements and intended use case;

- whether the brand publishes enough data to verify its claims.

A daily sound-quality build, a high-output SPL build and a shallow stealth installation do not need the same driver. That is why a leaderboard can guide decisions but should not replace system planning.

Why Independent Car Audio Testing Still Matters

Home audio already has a stronger independent measurement culture. Erin Hardison’s Erin’s Audio Corner is an example of Klippel-based testing without a speaker product line attached. That structure gives readers fewer conflicts to sort through.

Car audio does not yet have an equivalent at the same visibility level. ResoNix has created one of the most detailed public Klippel datasets for car audio subwoofers, but it has done so as a company entering the same product category it is measuring.

That leaves the market in an uncomfortable but useful position. The data exists. The conflict exists. Brands with the technical ability to respond have not published equivalent public measurements. Until they do, buyers should treat the ResoNix project as both a valuable dataset and a reminder that transparency works best when no one is selling the winner.

The next step for the industry is clear: more public Klippel data, more consistent standards and more manufacturers willing to show how their subwoofers behave beyond the spec sheet. Until then, the smartest buyer will read advertised numbers with caution and ask one simple question before trusting any subwoofer claim: measured by which method, and clean up to what limit?